Event-Driven Partner Integration

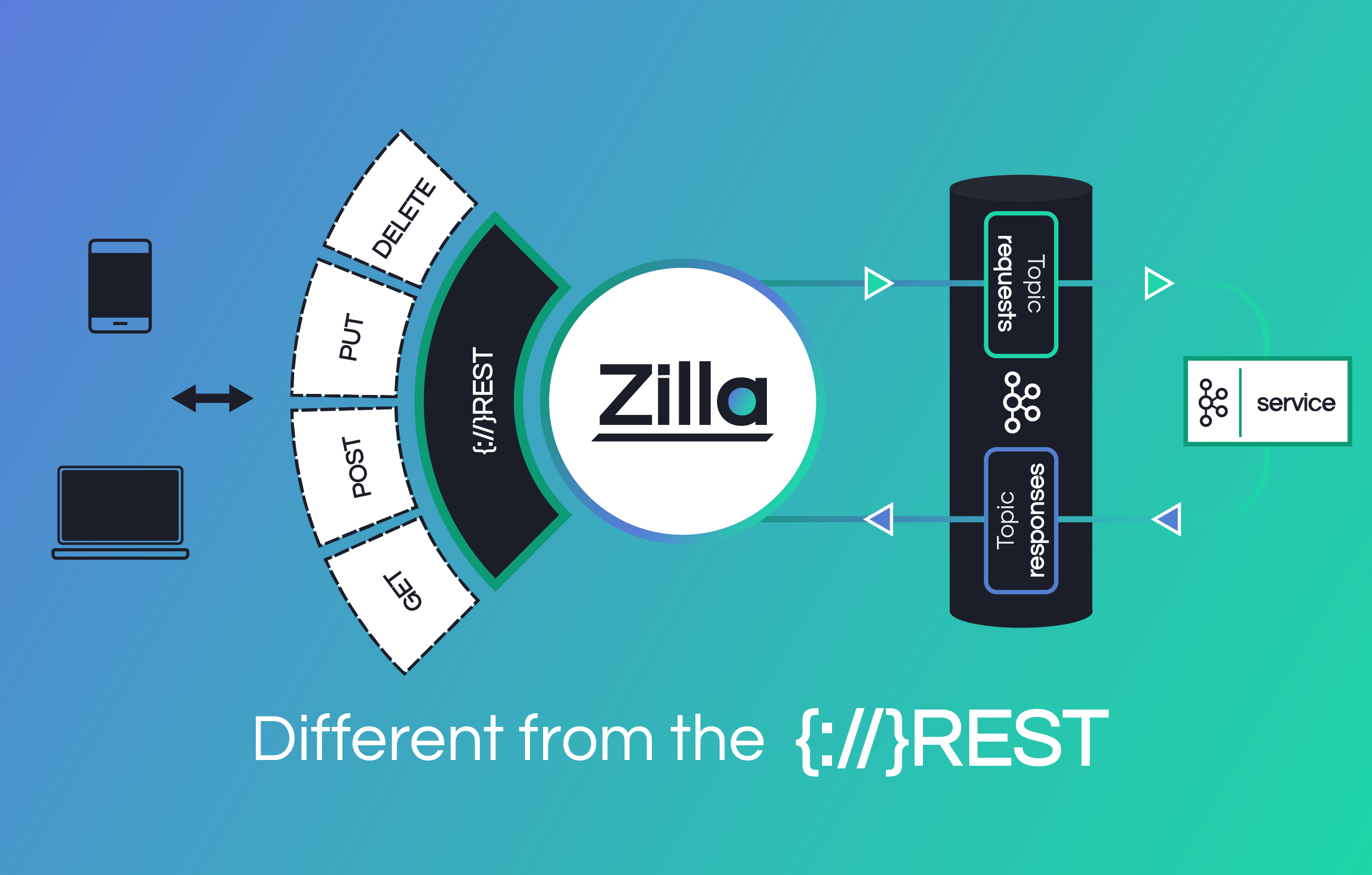

Share Real-time Kafka Data with Partners — without Exposing Your Brokers

Securely expose Kafka event streams to external partners through governed API data products, with built-in authentication, rate limiting, and schema enforcement.

Why sharing Kafka data with partners is harder than it should be

Partners need real-time data. Kafka has it. But giving partners direct broker access creates serious operational, security, and compliance risks.

Security Exposure

Kafka brokers are internal infrastructure — not designed to be internet-facing. Misconfigured partner clients can overwhelm brokers or access unauthorized data.

Months of Lead Time

A typical partner integration takes 3–6 months of infrastructure work before a single event reaches the partner. Business value is delayed by plumbing.

Compliance Gaps

Regulated industries need auditable access, data encryption, and fine-grained controls. Kafka's built-in ACLs alone don't meet these requirements at the partner boundary.

Middleware Proliferation

Engineering teams build custom consumer services, API layers, auth systems, and rate limiters — duplicating work across every partner integration.

Core Capabilities

Everything You Need for Secure Partner Data Exchange

API Data Products

Package Kafka topics as versioned, discoverable data products with plans, subscriptions, and usage tracking. Partners subscribe to products, not raw topics.

Rate Limiting & Quotas

Define throughput limits per partner, per product. Prevent any single partner from destabilizing your cluster or starving other consumers.
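To make the idea concrete, here is a minimal sketch of per-partner, per-product quotas using a token bucket. It illustrates the general technique only and is not Zilla's implementation; the `TokenBucket` and `QuotaEnforcer` names, and the rate and capacity values, are assumptions for the example.

```python
import time
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple


@dataclass
class TokenBucket:
    """Allows bursts up to `capacity`, refilled at `rate` tokens per second."""
    rate: float
    capacity: float
    tokens: float = field(init=False)
    updated: float = field(init=False)

    def __post_init__(self) -> None:
        # Start full so a new partner can send immediately.
        self.tokens = self.capacity
        self.updated = 0.0

    def allow(self, now: float, cost: float = 1.0) -> bool:
        # Refill in proportion to elapsed time, capped at capacity.
        elapsed = max(0.0, now - self.updated)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


class QuotaEnforcer:
    """Keeps one independent bucket per (partner, product) pair."""

    def __init__(self, rate: float, capacity: float) -> None:
        self.rate = rate
        self.capacity = capacity
        self.buckets: Dict[Tuple[str, str], TokenBucket] = {}

    def allow(self, partner: str, product: str, now: Optional[float] = None) -> bool:
        now = time.monotonic() if now is None else now
        key = (partner, product)
        if key not in self.buckets:
            self.buckets[key] = TokenBucket(self.rate, self.capacity)
        return self.buckets[key].allow(now)
```

Because each (partner, product) pair has its own bucket, one partner exhausting its quota has no effect on the others.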

Schema Validation

Enforce Avro, Protobuf, or JSON Schema at the gateway. Producers can't publish invalid data; consumers receive guaranteed well-formed messages.
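As a rough illustration of gateway-side validation, the sketch below rejects messages that are not well-formed before they would reach a topic. It is a deliberately simplified stand-in for a real JSON Schema validator, and `ORDER_SCHEMA` and `validate` are hypothetical names, not part of any Zilla API.

```python
import json

# Hypothetical, heavily simplified schema: field name -> expected Python type.
ORDER_SCHEMA = {"order_id": str, "amount": float, "currency": str}


def validate(payload: bytes, schema: dict) -> list:
    """Return a list of violations; an empty list means the message is accepted."""
    try:
        record = json.loads(payload)
    except (json.JSONDecodeError, UnicodeDecodeError):
        return ["payload is not valid JSON"]
    if not isinstance(record, dict):
        return ["payload is not a JSON object"]
    errors = []
    for name, expected in schema.items():
        if name not in record:
            errors.append(f"missing field: {name}")
        elif not isinstance(record[name], expected):
            errors.append(f"wrong type for field: {name}")
    return errors
```

A gateway applying this kind of check would reject the produce request whenever the returned list is non-empty, so consumers only ever see messages that passed.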

Data Privacy Controls

Classify fields as sensitive, enforce encryption on produce, and redact data for unauthorized consumers — all enforced at the Zilla gateway layer.
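Field-level redaction can be sketched in a few lines. The example assumes a set of field names classified as sensitive and a `pii:read` scope that marks a consumer as authorized; both are hypothetical and chosen only to show the shape of the policy.

```python
# Hypothetical classification: fields marked sensitive for a data product.
SENSITIVE_FIELDS = {"email", "card_number"}


def redact(record: dict, consumer_scopes: set, sensitive=SENSITIVE_FIELDS) -> dict:
    """Return a copy of `record` with sensitive fields masked, unless the
    consumer holds the (hypothetical) "pii:read" scope."""
    if "pii:read" in consumer_scopes:
        return dict(record)
    return {k: ("***" if k in sensitive else v) for k, v in record.items()}
```

The function returns a copy, so the original event is left untouched for consumers that are authorized to see it.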

Observability & Policy

Track traffic per partner, monitor error rates, diagnose client issues, and export metrics via OpenTelemetry. Integrated Grafana dashboards included.

Near-zero Latency Overhead

Zilla's stateless data plane adds minimal latency — benchmarked as the most performant Kafka-native proxy available. Critical for latency-sensitive use cases.

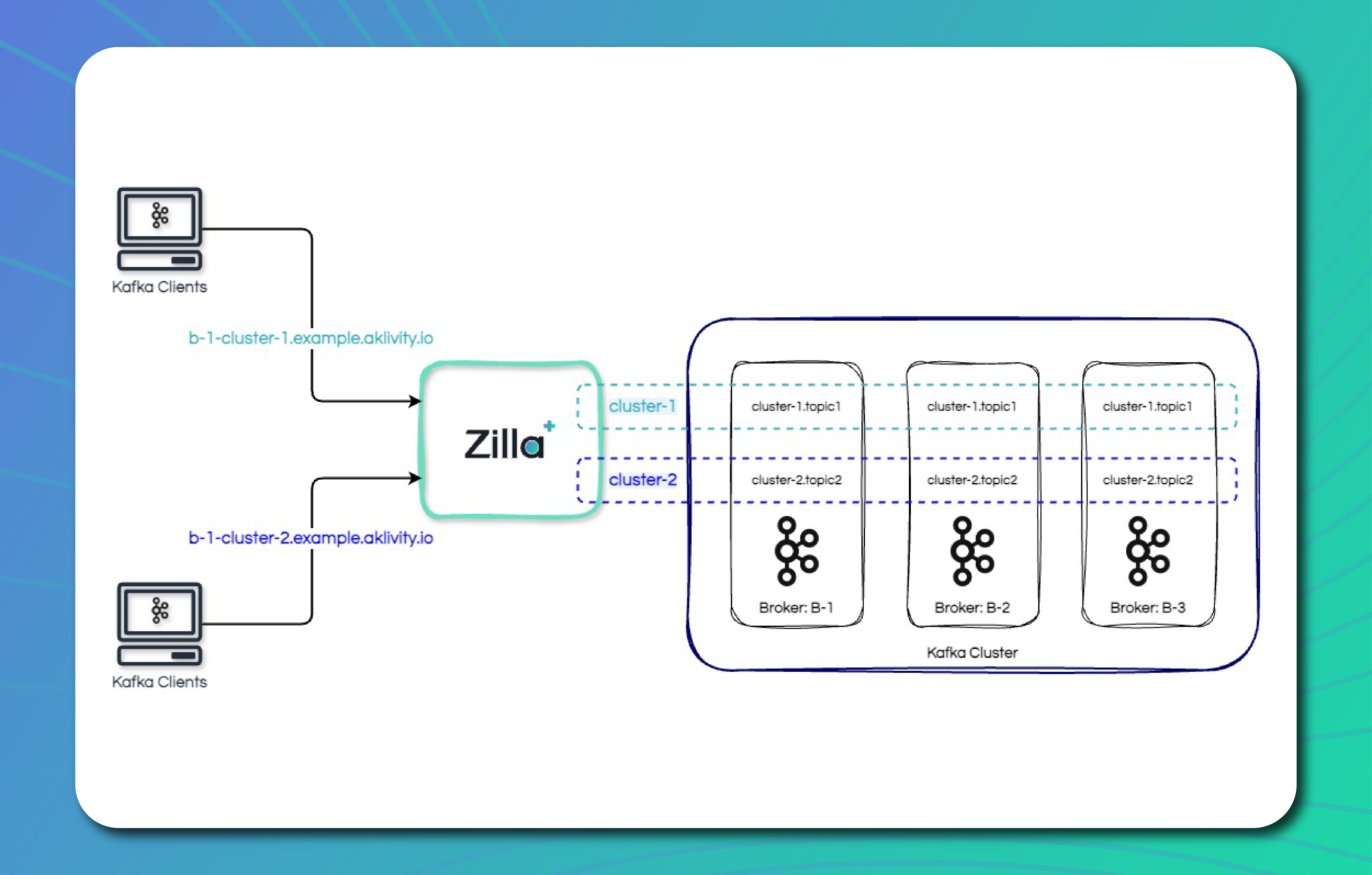

Connect Your Kafka Cluster

Register any Kafka deployment — Apache Kafka, Amazon MSK, Confluent Cloud, Aiven, Redpanda — along with your schema registry. Zilla auto-discovers topics and schemas.

Define an API Data Product

Select topics, attach an AsyncAPI specification (auto-derived or imported), set a version, and define a plan with authentication and rate limiting policies.

Publish to the Catalog

Add the data product to a catalog with visibility scoped for external partners. Partners can browse, read documentation, and request access through the platform.

Partners Subscribe & Connect

Partners register their application, subscribe to the data product, and receive API keys. They connect using standard Kafka clients pointed at the Zilla-managed endpoint.
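On the partner side, connecting might look like the sketch below, which builds librdkafka-style consumer properties. It assumes the API key and secret are presented as SASL/PLAIN credentials over TLS; the page does not state the mechanism, and the endpoint, group ID, and function name are placeholders.

```python
def partner_client_config(endpoint: str, api_key: str, api_secret: str, group_id: str) -> dict:
    """Build librdkafka-style consumer properties for a gateway endpoint.

    Assumption: the API key and secret map to SASL/PLAIN credentials
    over TLS. The actual mechanism may differ.
    """
    return {
        "bootstrap.servers": endpoint,   # the managed endpoint, never a broker address
        "security.protocol": "SASL_SSL",
        "sasl.mechanisms": "PLAIN",
        "sasl.username": api_key,
        "sasl.password": api_secret,
        "group.id": group_id,
        "auto.offset.reset": "earliest",
    }


config = partner_client_config(
    "orders.partners.example.com:9094",  # placeholder endpoint
    "my-api-key",
    "my-api-secret",
    "acme-orders",
)
```

With the `confluent-kafka` package installed, a dictionary like this can be passed to `confluent_kafka.Consumer(config)`, and the partner's application code is otherwise the same as for any Kafka cluster.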

Monitor, Govern, & Iterate

Track partner usage, review policy compliance, rotate credentials, and evolve data products with versioning — all from a single management console.

Zilla vs. Building it Yourself

DIY Approach

- Custom auth service per integration

- Complex rate limiting setup

- Separate schema registry + consumer logic

- Ticket-based partner onboarding process

- Network segmentation + proxy layer

- Time to first integration: 3–6 months

- Per-partner ongoing maintenance

Zilla Platform

- Built-in API keys + mTLS for partner auth

- Seamless per-partner, per-product quotas

- Gateway-level schema validation

- Self-service partner onboarding

- Partners never reach brokers

- Time to first integration: days to weeks

- Centralized management across all partners

Trusted by leading data-driven organizations

“Zilla Plus gave us secure, publicly reachable endpoints for our Amazon MSK cluster without compromising our private network — and dramatically reduced the lead time we used to spend building middleware layers. With its extensive protocol support and deep AWS integrations, we're confident Zilla is a one-stop solution for all of our external MSK integration needs.”

Read Case Study

Ready to Simplify Partner Integration?

Stop building custom middleware for every partner. Start sharing Kafka data securely in days, not months.