Zilla Platform 1.3: Endpoints, Policies, Self-Serve Certs, and More

Introducing Endpoints, Policies, and self-serve mTLS certificate management.

Two Ways In: API Data Products and Endpoints

Zilla Platform offers two access models for a Kafka cluster. They share the same governance backbone — policies, identity, schema enforcement, observability — but they solve different problems.

API Data Products package specific Kafka topics as discoverable, subscribable streams. A consumer finds a data product in the catalog, subscribes, and connects with credentials scoped to that product alone. Schema validation, authentication, and rate limiting are enforced at the gateway. This is a data-first model, built around the outcome of "give this team or partner controlled access to this specific stream." API Data Products are the right choice when data needs to be productized for internal consumption, exposed to partners, or delivered over non-Kafka protocols where Kafka-native access isn't appropriate.

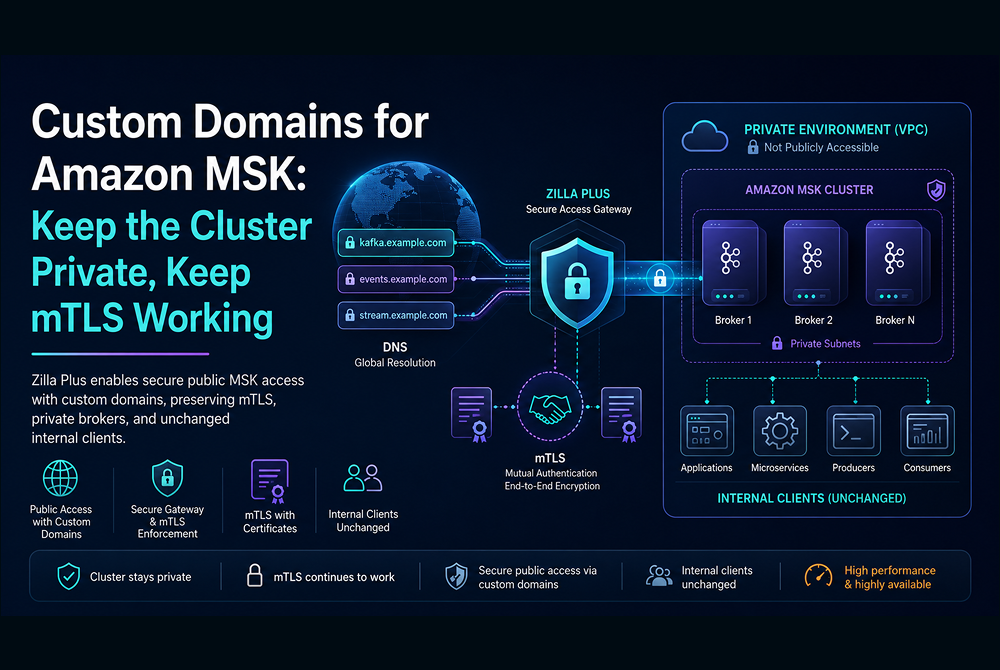

Endpoints expose the Kafka cluster itself as a governed entry point. A native Kafka client connects to an Endpoint at a dedicated hostname:port and speaks standard Kafka protocol — producing and consuming across topics, joining consumer groups, running Kafka Streams workloads, using admin APIs. The client authenticates with mTLS, and its certificate CN maps directly to a Kafka ACL principal. Policies attached to the Endpoint enforce organizational rules at the protocol level. This is a cluster-first model, built around the outcome of "let this team or service use Kafka directly, but with guardrails the platform team controls." Endpoints are the right choice for existing Kafka-native applications, stream processing workloads, platform-team operational access, and any service that needs the full Kafka surface area.

The distinction comes down to what the consumer needs. For a curated stream delivered as a product, use an API Data Product. For the Kafka cluster itself, governed, use an Endpoint. Both are self-serve. Both are fully governed. And both can coexist in front of the same cluster.

Secure Kafka Access, Fully Governed

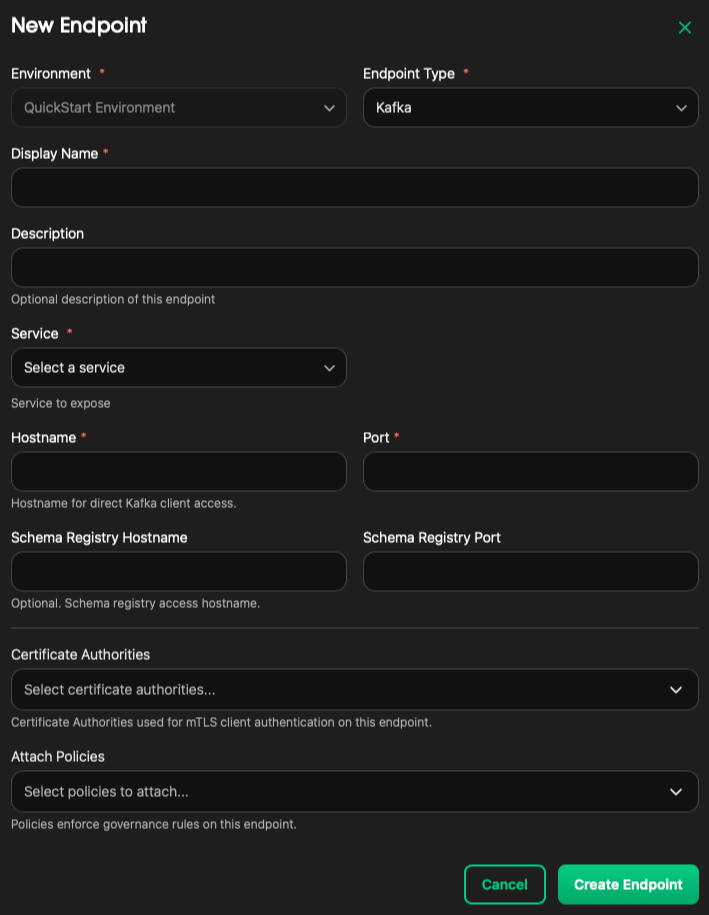

Endpoints give native Kafka clients a direct, governed path into your Kafka services. Each endpoint is reachable via a dedicated hostname:port on the Zilla Gateway.

Each Endpoint is a fully governed entry point. Attach Certificate Authorities to enforce mTLS, apply Policies to control what clients can and can't do, and optionally surface a Schema Registry address alongside the broker, all in one configuration.

mTLS, Simplified

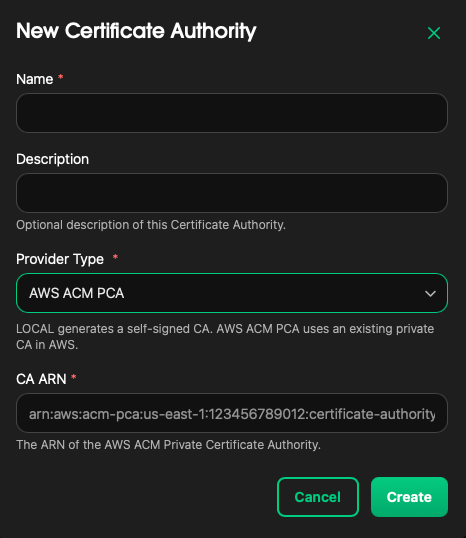

Setting up mutual TLS used to mean managing your own PKI or wiring up AWS ACM PCA by hand. In 1.3, Certificate Authorities are a platform primitive.

Register a CA once, either a local CA using your existing PEM certificate or an AWS ACM Private CA by simply providing its ARN, and the platform takes care of the rest. The Zilla Gateway validates every incoming client connection against your registered CAs in real time. No client presenting an untrusted certificate gets through.
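As a rough illustration of what "validate every connection against registered CAs" means at the TLS layer, the sketch below builds a server-side context with Python's standard `ssl` module. It is not the Zilla Gateway's implementation; the function name and the `ca_files` parameter are hypothetical, and a real server would also load its own certificate and key.

```python
import ssl


def build_mtls_server_context(ca_files=()):
    """Return a server-side TLS context that requires a trusted client cert.

    `ca_files` is a hypothetical list of PEM files, one per registered CA.
    """
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    # CERT_REQUIRED makes the handshake fail unless the client presents a
    # certificate that chains to one of the CAs loaded below.
    ctx.verify_mode = ssl.CERT_REQUIRED
    for ca_file in ca_files:
        ctx.load_verify_locations(cafile=ca_file)
    # A real server would also call ctx.load_cert_chain(...) with its own
    # certificate and private key before accepting connections.
    return ctx
```

With `verify_mode` set this way, a client that presents no certificate, or one signed by an unregistered CA, is rejected during the handshake, before any Kafka request is read.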

Self-Serve Client Certificates

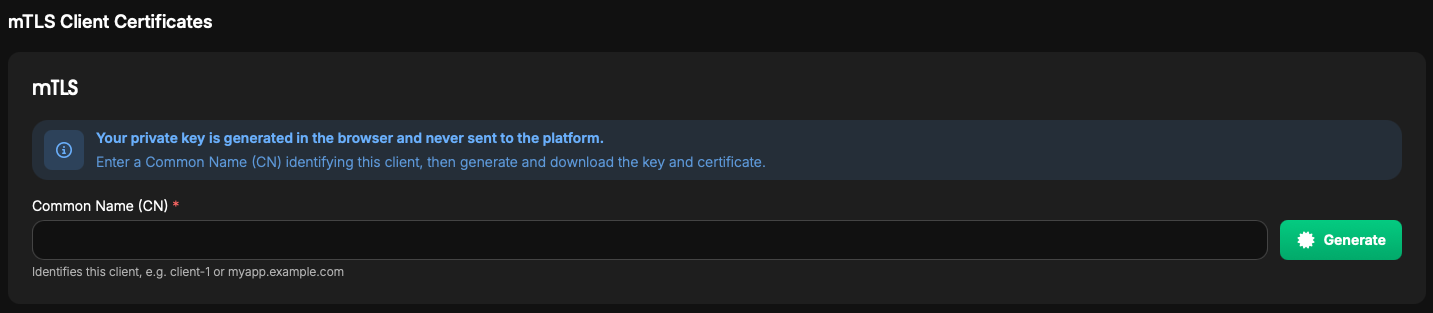

Once mTLS is enabled on an endpoint, any authorized team member can issue client certificates directly from the console, with no PKI expertise required.

Assign a Common Name (CN) to identify the client, click Generate, and the signed certificate and private key download instantly. The private key is generated entirely in the browser and never touches the platform, so your security posture stays intact.

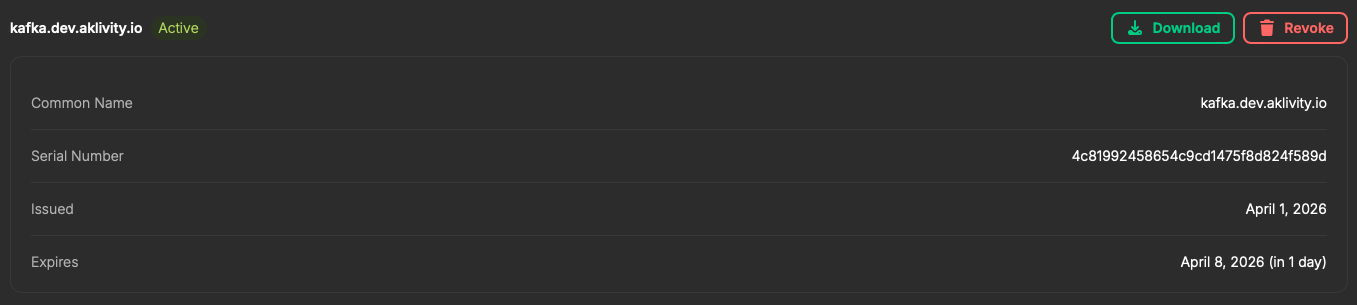

Every issued certificate is tracked with its CN, serial number, issue date, and expiry. When a client is decommissioned or a key is compromised, revocation is a single click that immediately blocks that client from reconnecting.
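The tracking and revocation behavior described above can be pictured as a small registry keyed by serial number. This is a minimal sketch under assumed names (`IssuedCert`, `CertRegistry`, `may_connect` are all hypothetical), not the platform's data model.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class IssuedCert:
    cn: str
    serial: int
    issued_at: datetime
    expires_at: datetime
    revoked: bool = False


class CertRegistry:
    """Hypothetical in-memory record of issued client certificates."""

    def __init__(self):
        self._by_serial = {}

    def issue(self, cn, serial, issued_at, lifetime):
        cert = IssuedCert(cn, serial, issued_at, issued_at + lifetime)
        self._by_serial[serial] = cert
        return cert

    def revoke(self, serial):
        # Revocation flips a flag; the next connection check sees it.
        self._by_serial[serial].revoked = True

    def may_connect(self, serial, now):
        cert = self._by_serial.get(serial)
        if cert is None or cert.revoked:
            return False
        # Certificates are only valid inside their issue/expiry window.
        return cert.issued_at <= now < cert.expires_at
```

A revoked or expired serial fails `may_connect`, which mirrors the "single click blocks reconnects" behavior: the check happens at connection time, not at issue time.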

For teams running mTLS end-to-end, the CN maps directly to the Kafka principal in your ACLs. The Zilla Gateway presents that same identity to the broker, so access control is consistent from the client all the way to Kafka with no gaps.
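To make the CN-to-principal mapping concrete, here is a small sketch that derives a principal string from a verified client certificate. It assumes the certificate is available in the dictionary shape returned by Python's `ssl.SSLSocket.getpeercert()`, and it uses the `User:` prefix that Kafka ACLs use for principals. The function name and the sample CN are hypothetical; how the gateway performs this mapping internally is not described here.

```python
def principal_from_peercert(peercert):
    """Return a Kafka-style principal ("User:<CN>") for a client certificate.

    `peercert` follows the structure of ssl.SSLSocket.getpeercert(): the
    "subject" entry is a tuple of RDNs, each a tuple of (name, value) pairs.
    """
    for rdn in peercert.get("subject", ()):
        for name, value in rdn:
            if name == "commonName":
                return f"User:{value}"
    raise ValueError("certificate subject has no commonName")


# Hypothetical certificate issued with CN "orders-service".
sample = {
    "subject": (
        (("organizationName", "Example Corp"),),
        (("commonName", "orders-service"),),
    )
}
print(principal_from_peercert(sample))
```

An ACL granted to `User:orders-service` on the broker would then apply to any client connecting with that certificate.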

Policies That Actually Enforce

Attaching a Policy to an endpoint means every request flowing through it is governed by the Zilla Gateway in real time at the Kafka protocol level. Clients cannot route around it.

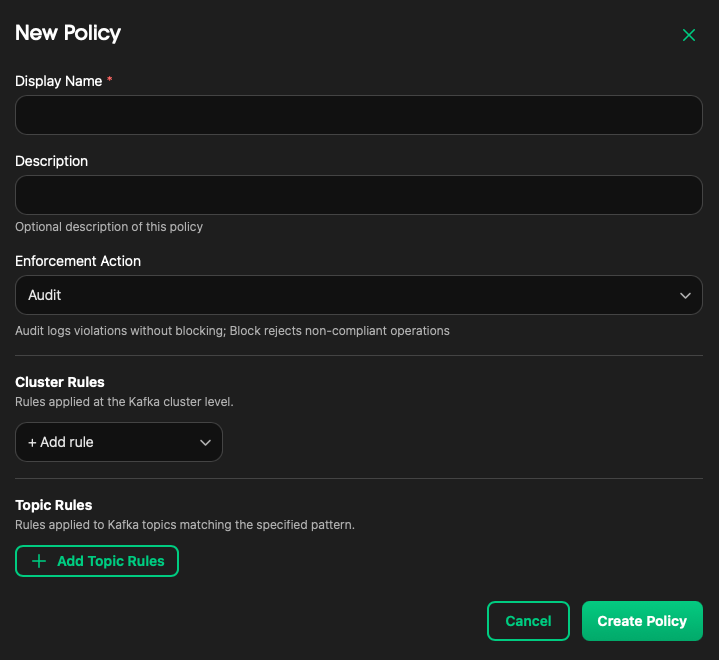

Choose Audit mode to log violations without disrupting traffic, or Block mode to reject non-compliant operations at the gateway.

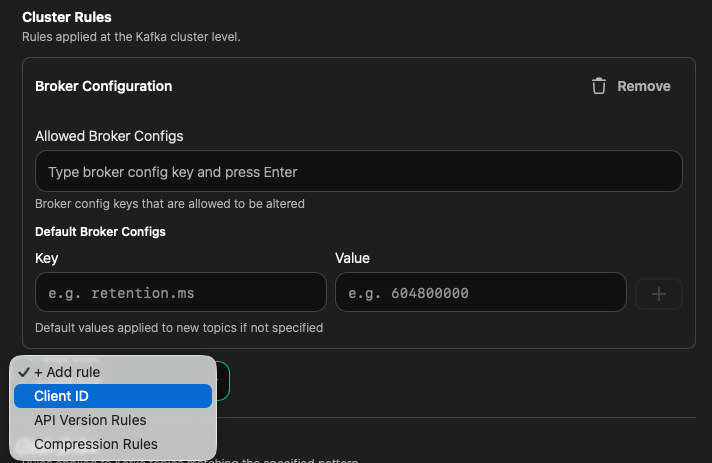

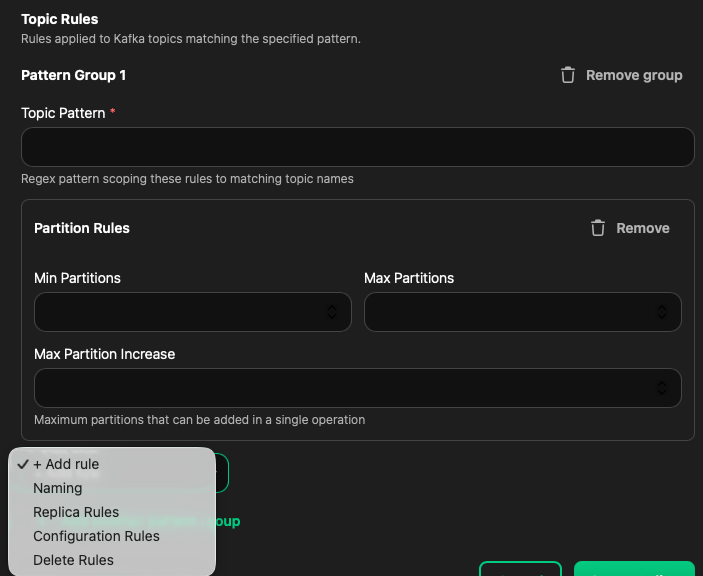

Apply cluster-level guardrails, topic-level naming conventions, configuration bounds, or whatever else your organization requires.
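The difference between Audit and Block mode is easiest to see on a single request. The sketch below evaluates a create-topic request against one naming rule and one configuration bound. The `Policy` fields, the rule names, and the example pattern are illustrative assumptions, not the platform's policy schema.

```python
import re
from dataclasses import dataclass


@dataclass
class Policy:
    enforcement: str         # "audit" or "block"
    topic_name_pattern: str  # hypothetical topic naming rule (regex)
    max_partitions: int      # hypothetical configuration bound


def evaluate_create_topic(policy, topic, partitions):
    """Return (allowed, violations) for a create-topic request."""
    violations = []
    if not re.fullmatch(policy.topic_name_pattern, topic):
        violations.append(f"topic name {topic!r} breaks the naming rule")
    if partitions > policy.max_partitions:
        violations.append(
            f"{partitions} partitions exceeds limit of {policy.max_partitions}"
        )
    # Audit mode records violations but lets the request through;
    # Block mode rejects any request with at least one violation.
    allowed = not violations or policy.enforcement == "audit"
    return allowed, violations
```

Running the same non-compliant request under both modes returns the same violations list, but only Block mode sets `allowed` to `False`. That makes Audit mode a reasonable way to measure a rule's impact before switching it to Block.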

The same policy framework applies whether clients are connecting via API Data Products or directly through an endpoint. Consistent governance, across the board.

Getting Started

What you need:

Get up and running with the Zilla Platform Quickstart:

docker compose -f oci://ghcr.io/aklivity/zilla-platform/quickstart up --wait && \
docker compose -f oci://ghcr.io/aklivity/zilla-platform/quickstart/env up --wait

Setup flow:

1. Create a Policy
   - Policies > New Policy
   - Set Enforcement Action (Audit or Block)
   - Add Cluster and/or Topic Rules

2. Create an Endpoint
   - Environments > [environment] > Endpoints > New Endpoint
   - Set hostname and port
   - Attach the Policy

3. Connect your Kafka client
   - Point to the endpoint hostname:port
   - Trigger a non-compliant operation to verify enforcement
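For the client-connection step, a Kafka client using mTLS needs the endpoint address plus its certificate material. The sketch below renders a properties file using standard Apache Kafka client settings for PEM-based TLS. The hostname, port, and file names are placeholders, and the helper function is hypothetical; substitute the values from your own endpoint and issued certificate.

```python
def render_client_properties(bootstrap, keystore_pem, truststore_pem):
    """Render Kafka client properties for an mTLS connection using PEM files.

    keystore_pem should hold the client's private key and certificate chain;
    truststore_pem should hold the CA certificate used to verify the server.
    """
    props = {
        "bootstrap.servers": bootstrap,
        "security.protocol": "SSL",
        "ssl.keystore.type": "PEM",
        "ssl.keystore.location": keystore_pem,
        "ssl.truststore.type": "PEM",
        "ssl.truststore.location": truststore_pem,
    }
    return "\n".join(f"{key}={value}" for key, value in props.items()) + "\n"


# Placeholder values; replace with your endpoint's hostname:port and files.
print(render_client_properties("orders.example.com:9092", "client.pem", "ca.pem"))
```

Once connected, an operation that breaks an attached rule (for example, creating a topic with a name outside the naming convention) should be logged in Audit mode or rejected in Block mode.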

Zilla Platform 1.3 makes governed, secure Kafka access straightforward. Endpoints, Policies, and self-serve certificate management are now part of the same platform workflow your teams already use.

No custom scripts, no manual cert pipelines, no one-off configurations. Get started with Quickstart and explore the full documentation below.

Endpoints / Certificate Authorities / Client Certificates / Policies
