The (Continued) Rise of Event-Driven Architectures

Event-driven architectures are no longer a "nice to have" but a "must have."

Given the ever-growing scale and urgency of modern digital use cases, it's easy to see why. Consumers today demand engagements that span multiple devices, want their data to be instantly available, and expect everything to work around the clock.

In response to these expectations, applications have increasingly moved away from more traditional REST or mesh-based approaches toward event-driven architecture (EDA). This growing need for EDA has driven the popularity and success of solutions such as Apache Kafka, which remains one of the most widely used event streaming platforms. And there's no sign of the EDA trend slowing down.

In an event-driven architecture, your application is made up of multiple microservices that communicate via a message broker: an intermediary that decouples services and enables message-based, or event-based, communication. This strategy offers two key advantages. First, your microservices can produce and consume events asynchronously, which helps make your entire application more efficient and scalable. Second, because microservices don't need to know how other microservices are implemented, each component of your application is decoupled. This makes it far easier to build and deploy each microservice independently of the others.

If you're building your own application, you'll need to decide whether EDA makes sense for it. That means thoroughly understanding EDA's pros and cons, as well as when it's a better fit than a REST or mesh-based design. By the end of this article, you should have a clear enough picture of EDA to make that call. You'll also learn about the challenges you might face when using EDA, the types of applications EDA makes possible, and specific EDA design patterns such as pub/sub and event streaming.

What Is Event-Driven Architecture?

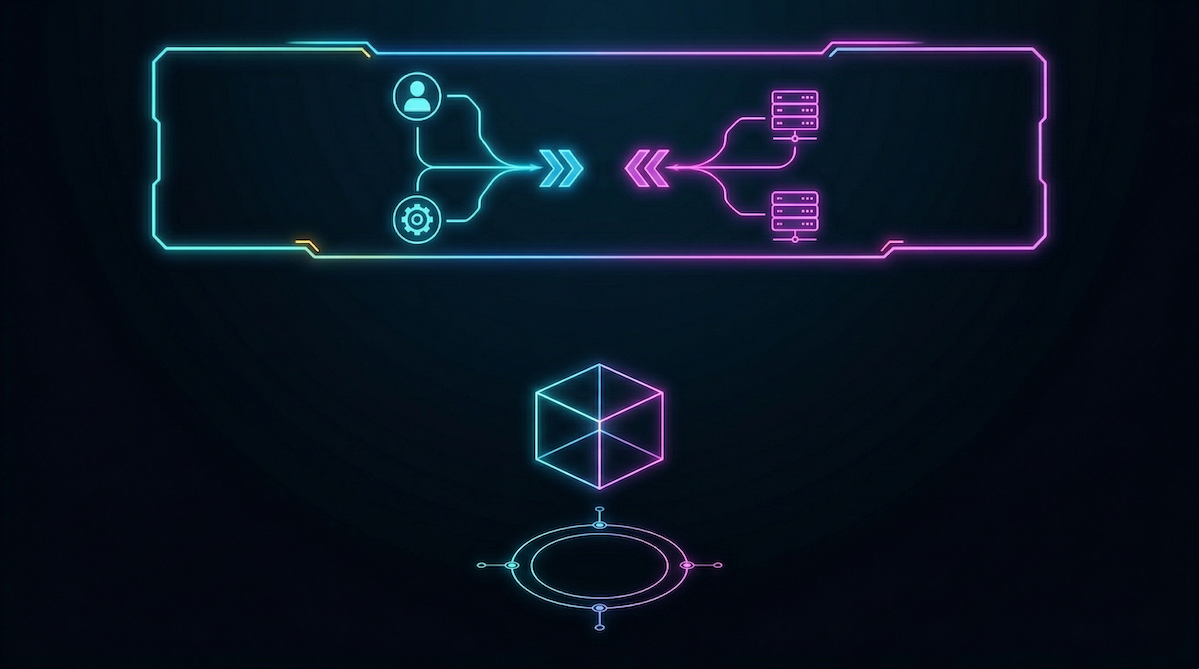

Let's take a closer look at event-driven architectures with the help of the following diagram:

Event-driven architectures are built upon three foundational concepts: microservices, events, and event brokers.

A microservice is an abstraction that represents a single component or module of your application. Together, multiple microservices form a complete application or product. The entire diagram above depicts a single application, which is composed of microservices A through D.

An event contains information about something that happens or changes within a microservice. Events are usually sent and received in a format that microservices can easily process, such as JSON.

Instead of having microservices send events directly to other microservices, applications typically use an event broker, which is a central entity that routes events to their correct destinations. An event broker can apply filtering rules to decide which events to forward and where to send them.

Events can be sent to zero, one, or multiple destinations. The diagram illustrates this with events A, B1, and B2:

- Microservice A sends event A, which two microservices, C and D, receive.

- Microservice B sends event B1, which one microservice, D, receives.

- Microservice B sends event B2, which no microservices receive.
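To make the routing concrete, here is a minimal in-memory sketch, assuming events are plain dicts with a `type` field and that each consumer registers a filter rule with the broker. `EventBroker`, `register`, and `publish` are hypothetical names for illustration, not any real broker's API; the registrations reproduce the diagram's routing of events A, B1, and B2.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Tuple

Event = dict  # e.g. {"type": "A", "payload": {...}}
Rule = Callable[[Event], bool]
Handler = Callable[[Event], None]


class EventBroker:
    """Hypothetical in-memory broker: consumers register a filter rule and a handler."""

    def __init__(self) -> None:
        self._routes: List[Tuple[Rule, Handler]] = []

    def register(self, rule: Rule, handler: Handler) -> None:
        self._routes.append((rule, handler))

    def publish(self, event: Event) -> int:
        """Deliver the event to every handler whose rule matches; return how many matched."""
        delivered = 0
        for rule, handler in self._routes:
            if rule(event):
                handler(event)
                delivered += 1
        return delivered


received: Dict[str, List[str]] = defaultdict(list)
broker = EventBroker()
# Microservice C only wants event A; microservice D wants events A and B1.
broker.register(lambda e: e["type"] == "A", lambda e: received["C"].append(e["type"]))
broker.register(lambda e: e["type"] in {"A", "B1"}, lambda e: received["D"].append(e["type"]))

print(broker.publish({"type": "A"}))   # 2 (C and D)
print(broker.publish({"type": "B1"}))  # 1 (D)
print(broker.publish({"type": "B2"}))  # 0 (no matching rule)
print(dict(received))                  # {'C': ['A'], 'D': ['A', 'B1']}
```

A production broker delivers events over the network and asynchronously, but the routing decision works the same way: the producer only publishes, and the broker's rules decide who receives what.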

While this isn't illustrated in the diagram for simplicity, microservices can act as both producers and consumers of events. For example, microservice C could produce an event, C1, that gets routed to microservices A and B.

Event-Driven Architecture vs. Traditional REST/Mesh-Based Architectures

REST and mesh-based applications are forms of service-oriented architecture (SOA), which has been highly popular in software development since the early 2000s. Now that EDA has emerged as a viable and often better alternative, developers frequently compare the two. Despite their key differences, you'll likely use a mix of EDA and REST or mesh-based models in a real-world application. The following sections highlight some of the most important differences.

Asynchronous Nature, Loose Coupling, and Lower Change Overhead

When a microservice calls a REST API, it must know the details of that API, such as its path and parameters. Every caller therefore depends on how other microservices' APIs are defined, which leads to tight coupling throughout the system. Developers working on such a system may face higher change overhead, because they must consider how a single change could affect multiple microservices.

In contrast, EDA microservices are loosely coupled, and each microservice is standalone and operates asynchronously. This means that you're encouraged to design your microservices so that they don't depend on each other. This sort of loose coupling helps reduce the risk that changes to a single microservice will have unintended consequences on another microservice. This also leads to lower change management overhead.

Better Real-Time Performance and Improved Agility

REST calls typically follow HTTP's request-response model, which makes them synchronous in practice: when microservice A invokes REST microservice B, A must wait for B's response before it can continue with other tasks. Overall, this can lead to poorer application performance, since many of your threads may be blocked waiting for responses.

Conversely, EDAs are asynchronous: the broker and its underlying protocol let producers push events and let consumers subscribe to them, without either side making or waiting on explicit requests. Because a microservice doesn't have to wait for a response to one task before continuing with another, EDA generally delivers better performance than REST APIs.
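The difference in waiting can be sketched with Python's `asyncio`, assuming `asyncio.sleep` stands in for network latency and an `asyncio.Queue` stands in for the broker. The function names are hypothetical. The request/response caller waits for each reply in turn, while the event producer only enqueues its events and moves on; a separate consumer does the slow work.

```python
import asyncio
import time
from typing import List


async def slow_service(delay: float) -> str:
    """Stand-in for a downstream service that takes `delay` seconds to respond."""
    await asyncio.sleep(delay)
    return "done"


async def request_response(delays: List[float]) -> float:
    """REST-style: call each service in turn, waiting for every reply."""
    start = time.perf_counter()
    for d in delays:
        await slow_service(d)  # the caller cannot move on until this reply arrives
    return time.perf_counter() - start


async def publish_and_continue(delays: List[float]) -> float:
    """Event-style: put events on a queue and return without waiting for the work."""
    queue: asyncio.Queue = asyncio.Queue()  # stands in for the broker

    async def consumer() -> None:
        while True:
            d = await queue.get()
            await slow_service(d)  # the slow work happens on the consumer side
            queue.task_done()

    worker = asyncio.create_task(consumer())
    start = time.perf_counter()
    for d in delays:
        queue.put_nowait(d)  # "publish" the event; no reply is awaited
    producer_wait = time.perf_counter() - start

    await queue.join()  # only so the demo finishes the work before shutting down
    worker.cancel()
    try:
        await worker
    except asyncio.CancelledError:
        pass
    return producer_wait


async def main() -> None:
    delays = [0.1, 0.1, 0.1]
    sync_wait = await request_response(delays)
    async_wait = await publish_and_continue(delays)
    print(f"request/response caller waited {sync_wait:.2f}s")  # roughly 0.30s
    print(f"event producer waited {async_wait:.4f}s")          # close to 0s


asyncio.run(main())
```

The total work is the same in both cases; what changes is who waits for it. In the event-style version, the producer's time is spent only on handing events off.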

EDA also allows your software teams to move faster. Microservices are small and independent, so you can build, test, and deploy much faster compared to a monolithic application. Organizationally, it also helps you keep your team sizes smaller, since you can logically assign each microservice (or collection of microservices) to a single team of developers.

Increased Scalability and Extensibility

Tight coupling among microservices can make REST architectures harder to scale, and because they're synchronous, you may have to scale up the entire application to handle increased load. In an EDA, by contrast, components work asynchronously and are only loosely coupled, so it's easier to selectively scale just the microservices that are seeing the most traffic.

EDA can also be more cost- and resource-efficient at scale. Because event-driven microservices simply react to incoming events, they're a natural fit for serverless platforms, which spare your developers from tedious server maintenance tasks: many cloud platforms handle things like replacing unhealthy hosts for you. Many cloud platforms also have a pricing model where you pay only for what you use, which can be significantly cheaper than owning your own servers.

In addition, REST architectures are generally less extensible, because changing an API can break clients that currently use it. In an EDA, a new consumer can often subscribe to existing events without any change to the producer.

What Are Some Challenges That EDA Presents?

So far, this article has painted EDA in a purely positive light. However, EDA also comes with some technical nuances that can be challenging to deal with.

Data Consistency

The larger the system, the more apparent the problem of data consistency becomes. Data is considered consistent when every copy of a value, wherever it's stored, is identical. Consistency is hard to achieve in EDA because of its asynchronous nature. For example, microservice A might modify a data value, and microservice B might retrieve that same value a couple of milliseconds later. Does B get the old value or the updated one? In other words, is the system eventually consistent or strongly consistent?
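Here is a minimal sketch of that A/B scenario, assuming hypothetical `ServiceA` and `ServiceB` classes and an in-memory `deque` standing in for the broker. B keeps its own replica of the value, so a read that happens before A's event is processed returns the old value. This is eventual consistency in miniature.

```python
from collections import deque


class ServiceA:
    """Hypothetical owner of the value; announces every change as an event."""

    def __init__(self, outbox: deque) -> None:
        self.value = 0
        self.outbox = outbox

    def update(self, new_value: int) -> None:
        self.value = new_value
        self.outbox.append({"type": "value_changed", "value": new_value})


class ServiceB:
    """Hypothetical reader; its replica only changes when it processes A's events."""

    def __init__(self) -> None:
        self.replica = 0

    def handle(self, event: dict) -> None:
        if event["type"] == "value_changed":
            self.replica = event["value"]


events: deque = deque()  # stands in for the broker between A and B
a, b = ServiceA(events), ServiceB()

a.update(42)
print(a.value, b.replica)  # 42 0  (B reads a stale value)

while events:  # later, the broker delivers the pending event
    b.handle(events.popleft())
print(a.value, b.replica)  # 42 42 (the copies have converged)
```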

If you decide to use EDA, be mindful of your application's data consistency requirements. Certain critical functionalities, such as handling payments, demand strong consistency. Eventual consistency would suffice for other features, such as counting the number of likes or comments on a post.

Increased Complexity

EDA systems can be highly complex simply because of the sheer number of microservices communicating with one another via events, but EDA adds complexity in other ways too. First, while the asynchronous nature of EDA is generally better for performance, concurrent processing is inherently harder for developers to wrap their heads around. Second, tracing a request through a fully asynchronous system can be very difficult; you'll likely have to dig up logs from multiple microservices.
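One common way to ease the tracing problem, not described above, is to attach a correlation ID to every event and copy it onto any follow-on events, so logs from different services can be joined on that ID. The sketch below assumes hypothetical `new_event` and `log_event` helpers and an in-memory list standing in for the services' logs.

```python
import uuid
from typing import List, Optional

trace_log: List[str] = []  # stands in for the logs written by several services


def new_event(event_type: str, payload: dict, parent: Optional[dict] = None) -> dict:
    """Create an event, inheriting the parent's correlation ID when there is one."""
    correlation_id = parent["correlation_id"] if parent else str(uuid.uuid4())
    return {"type": event_type, "correlation_id": correlation_id, "payload": payload}


def log_event(service: str, event: dict) -> None:
    """Prefix every log line with the correlation ID so lines can be grouped later."""
    trace_log.append(f"[{event['correlation_id']}] {service} handled {event['type']}")


# Hypothetical flow: the orders service emits OrderPlaced, and payments reacts to it.
order = new_event("OrderPlaced", {"order_id": 1})
log_event("orders", order)
payment = new_event("PaymentTaken", {"order_id": 1}, parent=order)
log_event("payments", payment)
unrelated = new_event("OrderPlaced", {"order_id": 2})
log_event("orders", unrelated)

# Filtering every service's logs by one ID reconstructs that request's path.
cid = order["correlation_id"]
for line in trace_log:
    if line.startswith(f"[{cid}]"):
        print(line)
```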

Payload Size Restrictions

Brokers limit how much data a single event payload can carry; Apache Kafka, for example, caps messages at roughly 1 MB by default. If your event payloads exceed the limit, you may have to compress them or split them into chunks. Even below the limit, you probably don't want your payloads to grow too large, as this can drastically hurt your application's performance.
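Splitting can be sketched as follows, assuming a hypothetical 1,000-byte limit on each chunk's data (real limits are broker-specific) and hypothetical `split_payload` and `reassemble` helpers. Each chunk carries the original event ID, a sequence number, and the total chunk count, so the consumer can rebuild the payload even if chunks arrive out of order or more than once.

```python
import json
import math
from typing import List

MAX_CHUNK_BYTES = 1_000  # hypothetical limit on each chunk's data; real limits vary by broker


def split_payload(event_id: str, payload: bytes, max_bytes: int = MAX_CHUNK_BYTES) -> List[dict]:
    """Split one large payload into numbered chunks whose data fits under the limit."""
    total = max(1, math.ceil(len(payload) / max_bytes))
    return [
        {"event_id": event_id, "seq": i, "total": total,
         "data": payload[i * max_bytes:(i + 1) * max_bytes]}
        for i in range(total)
    ]


def reassemble(chunks: List[dict]) -> bytes:
    """Rebuild the payload once every chunk has arrived, in whatever order."""
    by_seq = {c["seq"]: c["data"] for c in chunks}  # also drops duplicate deliveries
    total = chunks[0]["total"]
    if set(by_seq) != set(range(total)):
        raise ValueError(f"incomplete payload: have chunks {sorted(by_seq)} of {total}")
    return b"".join(by_seq[i] for i in range(total))


payload = json.dumps({"items": list(range(1000))}).encode("utf-8")
chunks = split_payload("evt-1", payload)
print(len(chunks), "chunks")
print(reassemble(list(reversed(chunks))) == payload)  # True
```

The limit here applies only to each chunk's `data`, so in practice you would leave headroom for the metadata fields. Compressing the payload first (for example with `zlib.compress`) can reduce the number of chunks or avoid splitting altogether.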

Handling Duplicates (Idempotency)

Any EDA system can produce duplicate events. For instance, Kafka and many other event streaming platforms commonly provide what's known as at-least-once delivery, meaning that messages may be duplicated but not lost. This makes designing and implementing your microservices more challenging, since your event handlers must be idempotent: processing the same event twice must have the same effect as processing it once.
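A common way to achieve idempotency is to record the IDs of events you've already applied and skip repeats. The sketch below assumes each event carries a unique `event_id`. `PaymentConsumer` is hypothetical, and it keeps processed IDs in memory, whereas a real service would persist them, ideally in the same transaction as the state change.

```python
from typing import Set


class PaymentConsumer:
    """Hypothetical consumer that applies each charge at most once, keyed by event ID."""

    def __init__(self, balance: int) -> None:
        self.balance = balance
        self.processed_ids: Set[str] = set()  # a real service would persist this

    def handle(self, event: dict) -> None:
        if event["event_id"] in self.processed_ids:
            return  # duplicate delivery: the charge was already applied
        self.balance -= event["amount"]
        self.processed_ids.add(event["event_id"])


consumer = PaymentConsumer(balance=100)
charge = {"event_id": "evt-123", "amount": 30}

# With at-least-once delivery, the same event may arrive more than once.
for delivery in (charge, charge, charge):
    consumer.handle(delivery)

print(consumer.balance)  # 70, not 10
```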

What New Opportunities Does EDA Create?

EDA opens up opportunities and use cases that more traditional architectures have a hard time serving.

Proactive Monitoring Solutions

With EDA, you can build proactive monitoring solutions that deliver logs, traces, and other application telemetry data in real time. EDA is a natural fit for these applications because telemetry data is often generated in response to application events or API calls.

Enhanced Connectivity for IoT

IoT is a field that demands low latency and fast response times. As the amount of data that modern IoT solutions must process grows rapidly, EDA makes it much easier to scale up the number of connections without degrading performance.

Real-Time Content Recommendations

Anything that requires dynamic, real-time responses is a good candidate for EDA. Content recommendation is one such use case. For example, a system may produce relevant video recommendations based on a constant stream of incoming event data that represents videos users have watched so far.

Better Data Analytics for Marketing

Marketing teams can use EDA systems to respond to analytics data in real time. For example, if a particular marketing campaign is driving more click-throughs, teams can see this as it happens and double down on the campaign.

What Are the Most Common EDA Patterns Today?

Within EDA, there are typically two common architecture patterns: publish-subscribe (pub/sub) and event streaming. Consumers in EDA also have a few common methods of processing received events.

Publish-Subscribe (Pub/Sub)

In the publish-subscribe model, events are organized in abstractions called topics. A central entity keeps track of which hosts are subscribed to which topics. When an event is published to a topic, the central entity distributes the event to every host that's subscribed to that topic.

In this diagram, Publisher1 and Publisher2 push messages m1 and m2 to Topic 1. Immediately, messages m1 and m2 get sent to each of Topic 1's subscribers. Subscriber1 is the only subscriber to Topic 1, so it receives both messages.

Also, Publisher3 pushes the message m3 to Topic 2. Immediately, m3 gets sent to each of Topic 2's subscribers. This means that both Subscriber2 and Subscriber3 receive m3 simultaneously.
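The topic routing described for this diagram can be reproduced with a small in-memory sketch, assuming a hypothetical `PubSubBroker` class that plays the role of the central entity. Publishers only name a topic; the broker looks up that topic's subscribers and pushes a copy of the message to each of them.

```python
from collections import defaultdict
from typing import Dict, List


class PubSubBroker:
    """Hypothetical central entity that tracks which subscribers follow which topics."""

    def __init__(self) -> None:
        self.subscribers: Dict[str, List[str]] = defaultdict(list)
        self.inboxes: Dict[str, List[str]] = defaultdict(list)

    def subscribe(self, subscriber: str, topic: str) -> None:
        self.subscribers[topic].append(subscriber)

    def publish(self, topic: str, message: str) -> None:
        # Push a copy of the message to every current subscriber of the topic.
        for subscriber in self.subscribers[topic]:
            self.inboxes[subscriber].append(message)


broker = PubSubBroker()
broker.subscribe("Subscriber1", "Topic 1")
broker.subscribe("Subscriber2", "Topic 2")
broker.subscribe("Subscriber3", "Topic 2")

broker.publish("Topic 1", "m1")  # sent by Publisher1
broker.publish("Topic 1", "m2")  # sent by Publisher2
broker.publish("Topic 2", "m3")  # sent by Publisher3

print(dict(broker.inboxes))
# {'Subscriber1': ['m1', 'm2'], 'Subscriber2': ['m3'], 'Subscriber3': ['m3']}
```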

Event Streaming

In the event streaming model, there is a central stream or log to which events are written. This stream is typically divided into partitions, where each partition is somewhat analogous to a topic in the pub/sub model. Instead of having clients subscribe to the stream, a client can begin reading from any position in the stream.

In this diagram, note that message ordering within each partition is preserved. For example, in Partition 1, message 101 arrived first, followed by 102 and 103. Client1 started reading from Partition 1 and is currently reading message 101. In the event streaming model, clients are responsible for keeping track of where they're reading from and for advancing their own position in the stream.

Similarly, Client2 is currently reading message 202 from Partition 2, and Client3 is reading message 303 from Partition 3. Messages aren't automatically deleted from the stream after a client processes them, so it's possible to replay events. Most streaming platforms offer some sort of retention or time-to-live (TTL) setting, and messages past their TTL are marked for deletion.
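The following sketch models one partition as an append-only log, assuming hypothetical `Partition` and `Client` classes and explicit timestamps so the TTL behavior is deterministic. It shows three properties of the model: each client tracks and advances its own position, reading doesn't delete messages (so a second client can replay from an earlier offset), and records past the TTL go away. A real platform typically marks expired records for deletion and cleans them up later; this sketch drops them immediately.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Partition:
    """Append-only log; reading never removes records, and offsets never change."""

    records: List[Tuple[int, float, str]] = field(default_factory=list)  # (offset, timestamp, message)
    next_offset: int = 0

    def append(self, message: str, now: float) -> int:
        offset = self.next_offset
        self.records.append((offset, now, message))
        self.next_offset += 1
        return offset

    def read(self, offset: int, max_records: int = 10) -> List[Tuple[int, str]]:
        return [(o, m) for o, _, m in self.records if o >= offset][:max_records]

    def expire(self, ttl_seconds: float, now: float) -> None:
        """Drop records older than the TTL; surviving records keep their offsets."""
        self.records = [r for r in self.records if now - r[1] < ttl_seconds]


class Client:
    """A reader that tracks and advances its own position in a partition."""

    def __init__(self, partition: Partition, start_offset: int = 0) -> None:
        self.partition = partition
        self.position = start_offset

    def poll(self) -> List[str]:
        batch = self.partition.read(self.position)
        if batch:
            self.position = batch[-1][0] + 1  # move past the last record read
        return [m for _, m in batch]


partition1 = Partition()
for msg in ("101", "102", "103"):
    partition1.append(msg, now=0.0)

client1 = Client(partition1)
print(client1.poll())  # ['101', '102', '103']
print(client1.poll())  # [] (nothing new yet)

replayer = Client(partition1, start_offset=1)
print(replayer.poll())  # ['102', '103'] (replayed from an earlier offset)

partition1.expire(ttl_seconds=60.0, now=120.0)
print(partition1.read(0))  # [] (every record is past its TTL)
```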

Event Processing Patterns

To actually process events, clients follow a few general patterns as well:

- Simple event processing (SEP): In SEP, clients immediately take action when they receive an event. There is little to no extra processing or filtering of messages.

- Complex event processing (CEP): In CEP, clients wait for multiple events that fulfill certain criteria to take place before taking an action.

- Pub/sub processing: In pub/sub processing, clients must subscribe to certain topics before being able to receive messages from those topics.

- Event stream processing (ESP): In ESP, clients read from real-time streams under the event streaming model discussed in the previous section. Clients can start reading from the stream at any position.
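The difference between SEP and CEP can be sketched with a hypothetical failed-login scenario, assuming events are dicts with `type`, `user`, and a `ts` timestamp in seconds. The simple processor reacts to every event; the complex processor acts only when three failures for the same user fall within a 60-second window. The threshold, window, and names are arbitrary choices for illustration.

```python
from collections import deque
from typing import Deque, Dict, List


def simple_processor(event: dict, actions: List[str]) -> None:
    """SEP: react to every matching event immediately, with no extra state."""
    if event["type"] == "login_failed":
        actions.append(f"log failure for {event['user']}")


class FailedLoginDetector:
    """CEP: act only when `threshold` failures for one user occur within the window."""

    def __init__(self, threshold: int = 3, window_seconds: float = 60.0) -> None:
        self.threshold = threshold
        self.window = window_seconds
        self.recent: Dict[str, Deque[float]] = {}

    def process(self, event: dict, actions: List[str]) -> None:
        if event["type"] != "login_failed":
            return
        times = self.recent.setdefault(event["user"], deque())
        times.append(event["ts"])
        while times and event["ts"] - times[0] > self.window:
            times.popleft()  # forget failures that fell outside the window
        if len(times) >= self.threshold:
            actions.append(f"lock account {event['user']}")
            times.clear()  # start counting afresh after acting


events = [{"type": "login_failed", "user": "ana", "ts": t} for t in (0, 10, 20)]
sep_actions: List[str] = []
cep_actions: List[str] = []
detector = FailedLoginDetector()
for e in events:
    simple_processor(e, sep_actions)
    detector.process(e, cep_actions)

print(len(sep_actions))  # 3 (one action per event)
print(cep_actions)       # ['lock account ana'] (one action for the whole pattern)
```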

Apache Kafka's Role in EDA

Apache Kafka is one of the most popular open source event streaming platforms. Thousands of companies use Kafka to power use cases ranging from financial transaction processing to log aggregation. The widespread use of Kafka plays a huge role in the success of EDA, and there are a few reasons why so many trust Kafka for their event streaming needs.

Highly Performant

Kafka is built to handle very large data volumes; in fact, the larger your data, the more likely it is that Kafka is a good fit. Similar to the process illustrated in the earlier diagram on event streaming, Kafka uses topics and partitions to divide messages into logical groups. This guarantees ordering within each partition and lets many clients read from different partitions of a topic simultaneously.
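Producers typically choose a partition by hashing an event's key, so all events with the same key land in the same partition and keep their relative order. The sketch below assumes three partitions and uses CRC32 from Python's `zlib` as the stable hash; Kafka's default partitioner uses a different hash (murmur2), so actual partition numbers would differ. `partition_for` is a hypothetical helper.

```python
import zlib
from collections import defaultdict
from typing import Dict, List, Tuple

NUM_PARTITIONS = 3  # assumed partition count for this example


def partition_for(key: str, num_partitions: int = NUM_PARTITIONS) -> int:
    """Map a key to a partition with a stable hash (CRC32 here, not Kafka's murmur2)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions


partitions: Dict[int, List[Tuple[str, str]]] = defaultdict(list)
events = [("user-1", "login"), ("user-2", "login"), ("user-1", "add_to_cart"),
          ("user-1", "checkout"), ("user-2", "logout")]
for key, value in events:
    partitions[partition_for(key)].append((key, value))

# All of user-1's events sit in one partition, in the order they were produced.
user1_events = [v for k, v in partitions[partition_for("user-1")] if k == "user-1"]
print(user1_events)  # ['login', 'add_to_cart', 'checkout']
```

Python's built-in `hash()` would be a poor choice here, because string hashes are randomized per process, so two producers could disagree about which partition a key belongs to.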

Supports Backpressure

When using Kafka, your clients are responsible for pulling data, which is typical of the event streaming pattern. This supports backpressure throughout your system, since clients only pull data from a partition when they have the resources to process it. Brokers never flood a slow client with new events; instead, that client simply falls behind and catches up when it can.
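The pull model can be sketched as a consumer that asks for at most `max_records` records per poll and advances its own offset, assuming a plain Python list stands in for one partition. `PullConsumer` is hypothetical; Kafka's consumer exposes a comparable cap through its `max.poll.records` setting.

```python
from typing import List


class PullConsumer:
    """Hypothetical consumer that fetches at most `max_records` per poll, at its own pace."""

    def __init__(self, log: List[int], max_records: int) -> None:
        self.log = log  # stands in for one partition on the broker
        self.max_records = max_records
        self.offset = 0

    def poll(self) -> List[int]:
        """Fetch the next batch starting at this consumer's offset, then advance it."""
        batch = self.log[self.offset:self.offset + self.max_records]
        self.offset += len(batch)
        return batch


log = list(range(10))  # ten records are waiting on the broker
consumer = PullConsumer(log, max_records=4)

# The consumer calls poll() only when it is ready for more work.
print(consumer.poll())  # [0, 1, 2, 3]
print(consumer.poll())  # [4, 5, 6, 7]
print(consumer.poll())  # [8, 9]
print(consumer.poll())  # [] (caught up; the records are still in the log)
```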

Resilient

Kafka is a distributed system: a Kafka cluster spreads data across multiple nodes, which Kafka calls brokers. Kafka also replicates your data so that multiple copies exist on different brokers, which adds resiliency and fault tolerance when a particular node goes down.

Unifies Event-Streaming Architecture

Kafka can be thought of as an "all-in-one" system that unifies data messaging, storage, and stream processing. That makes it a more complete solution than something like a traditional message queue, which is only responsible for passing messages on to clients.

On a larger scale, Kafka supports the shift away from traditional practices such as building cubes for data analysis. Previously, memory and performance limitations meant that specific sections of large data lakes had to be loaded into separate "cubes" for easier analysis.

Today, many analytical databases use a columnar format and a massively parallel processing (MPP) architecture, which makes them far more powerful. Kafka acts as the glue between these databases and the applications that need streaming data, making this kind of unified streaming architecture possible.

Conclusion

This article introduced event-driven architectures, from the basics of microservices and events to specific EDA design patterns. EDA can be an excellent paradigm to adopt if you're looking to improve team agility, decouple your software systems, and achieve large scale. Indeed, compared to more traditional REST and mesh-based architectures, EDA tends to offer better performance, lower change overhead, and better overall scalability.

Of course, EDA has its drawbacks. There are numerous technical nuances to consider when adopting EDA, such as data consistency, increased complexity, and idempotency. You'll also have to choose among specific EDA design approaches, such as pub/sub and event streaming. Kafka, an "all-in-one" event streaming platform, can help you address several of these concerns. Hopefully, you'll find the information here useful when deciding whether to adopt EDA for your next great data streaming application.
